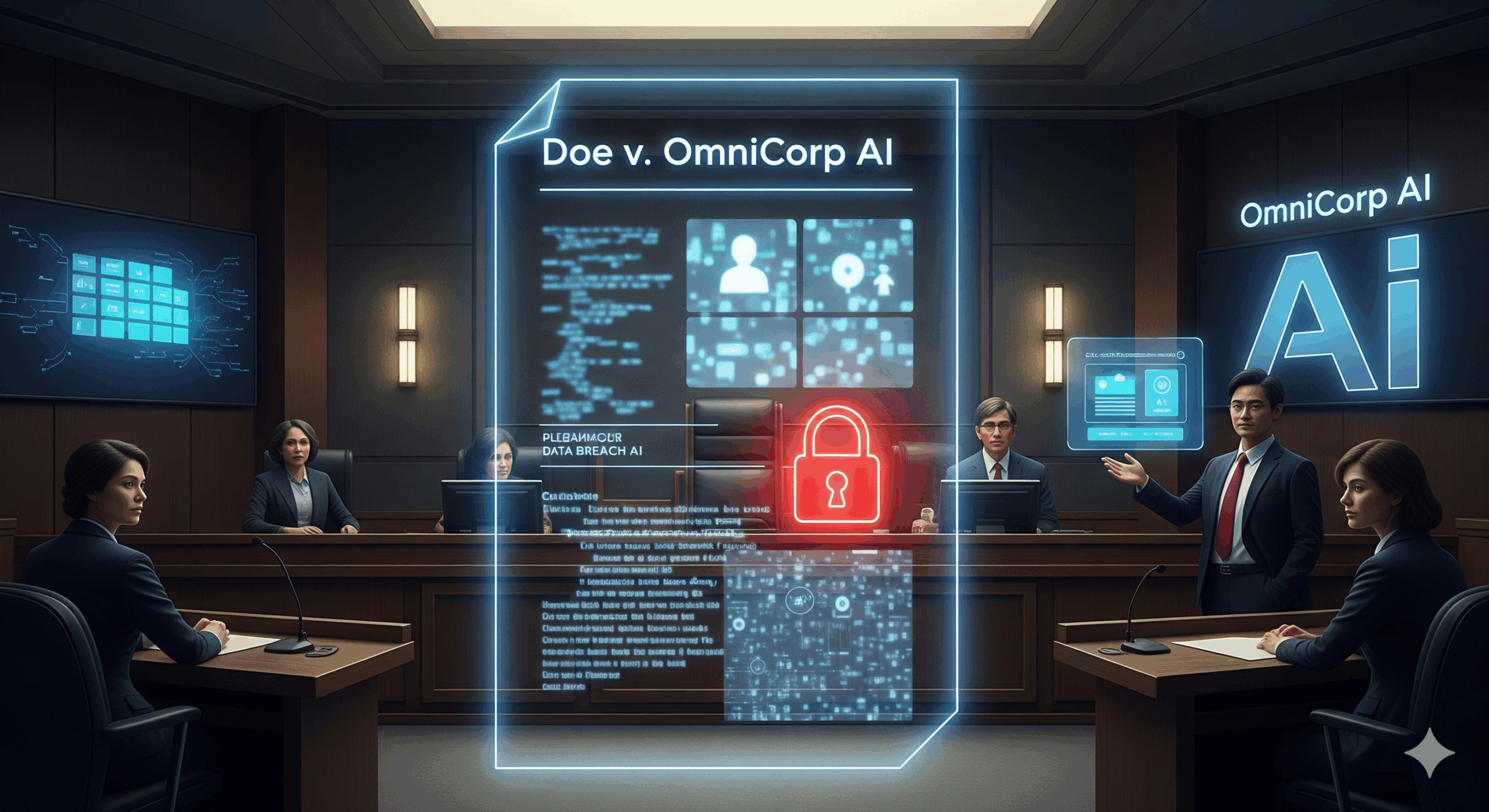

Otter.ai faces a class-action lawsuit alleging that the company secretly records users’ confidential conversations on platforms such as Zoom, Google Meet, and Microsoft Teams. The suit claims Otter.ai does so without obtaining consent from all parties involved, which may violate both federal and state privacy laws. The lead plaintiff, Justin Brewer, alleges that the service recorded his own confidential meeting. Brewer asserts that he was unaware of the recording and never consented to it. The lawsuit seeks to represent millions of users whose private conversations were allegedly intercepted and whose data was allegedly used to train the company’s artificial intelligence models.

This case could establish a new precedent for the entire technology industry, shaping how companies must handle user consent. The complaint asserts a profound breach of privacy and argues that the company is not sufficiently transparent about its data practices. The suit focuses on federal wiretapping laws and also references the California Invasion of Privacy Act. The legal battle may force widespread changes in how people interact with AI-powered services, and it casts a long shadow over the future of automated transcription. At its core, the lawsuit highlights a conflict between innovation and individual rights, and it questions whether convenience should ever come at the expense of privacy.

The lawsuit also alleges that Otter.ai’s “Notetaker” tool joins meetings without affirmative consent from all participants. If a meeting host has integrated their account with Otter, the AI may join automatically, so non-account holders have no say and are not asked for permission to be recorded. This lack of explicit notification is central to the complaint. The suit claims Otter.ai tries to shift its legal responsibility by outsourcing the burden of obtaining consent to its users. That puts those users in a precarious position: they might inadvertently violate their own company’s data policies or expose sensitive information. The complaint also notes that Otter.ai’s privacy policy mentions using “de-identified” audio for training, and it points out that research has shown such de-identification techniques can be ineffective.

The plaintiffs argue that Otter.ai benefits financially from these alleged practices. The company’s revenue and market position are tied to the effectiveness of its AI, which in turn relies on vast amounts of training data. The lawsuit therefore claims the company has a strong incentive to collect as much data as possible, regardless of consent. The suit seeks significant financial penalties, but it also aims for a more profound outcome: a change in business practices.

Courts are increasingly moving beyond purely financial remedies and demanding structural reforms. The case could therefore compel Otter.ai to redesign its consent mechanisms and to implement clearer, more transparent user experiences that give users more control over their data. It also serves as a warning to other companies that rely on user data for their AI systems: they should be prepared for heightened scrutiny. The stakes for digital privacy are high, and the case could redefine the relationship between users and the AI tools that have become deeply integrated into daily life.