Once upon a time, we worried about Photoshop. Today, the concern is far more sci-fi. Enter deepfake technology, a digital magic trick that lets anyone wear anyone else’s face. It’s thrilling. It’s terrifying. And most importantly, it raises some serious ethical questions.

So, where do we draw the line between fun and fraud?

What Exactly Is a Deepfake?

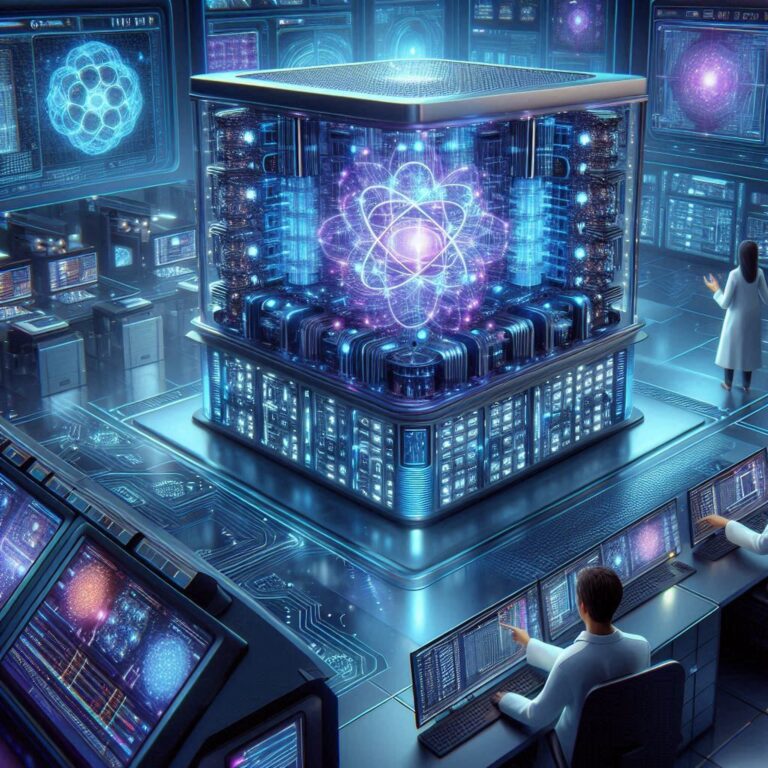

A deepfake is a hyper-realistic synthetic media clip, often video or audio, created using AI. It swaps faces, mimics voices, and replicates movements with uncanny accuracy. In other words, it’s a superpowered version of digital impersonation.

The technology behind it relies on deep learning algorithms. These algorithms analyze thousands of real images or sounds to create a believable fake. Once limited to researchers and movie studios, deepfake tools are now available to just about anyone with an internet connection.

The Rise of the Deepfake Era

Let’s not sugarcoat it: deepfake content is exploding. Social media has accelerated its spread. TikTok users swap their faces with celebrities’ for laughs. YouTube creators use the technology for parody. Even politicians have seen themselves speaking words they never said.

In the U.S., deepfake content has entered elections, entertainment, and even courtrooms. In January 2024, a robocall mimicking President Biden’s voice made national news. It urged New Hampshire voters to skip the state’s primary. The call was fake. The impact was real.

The Good, the Bad, and the Nightmare Fuel

The Good

Not all deepfakes are malicious. Hollywood uses them for visual effects, like de-aging actors or bringing back characters after an actor has died. Education and accessibility benefit, too: AI-generated voices help people with speech disorders communicate.

Advertising also uses the technology creatively. Brands can now generate personalized messages using influencers’ likenesses. It’s cool, a bit creepy, but mostly harmless, at least so far.

The Bad

Unfortunately, deepfakes also have a dark side. One of the worst offenders is non-consensual explicit content, which accounts for the majority of harmful deepfake videos online. Victims, most often women, find their faces pasted onto explicit videos without their permission.

It’s a digital assault, and sadly, it’s happening every day.

Political manipulation is another danger. Deepfakes can sway public opinion by putting words into the mouths of leaders. In the wrong hands, the technology becomes a weapon of misinformation.

The Truly Terrifying

Imagine receiving a video of a loved one asking for money. Their voice, face, and mannerisms all check out. Would you question it? That’s the scariest part: deepfakes can target you personally.

Scammers are using AI to clone voices. In one widely reported 2019 case, fraudsters tricked the chief executive of a UK energy firm into transferring roughly $243,000 after a phone call that mimicked his boss’s voice. The tech makes old-school phishing look like child’s play.

Why This Matters for America

In a country where “fake news” already clouds trust, deepfake technology adds fuel to the fire. Americans pride themselves on free speech, transparency, and informed decision-making. Deepfakes threaten all three.

This leads us to ask: Can a democracy survive if people no longer trust what they see and hear?

Where Should We Draw the Line?

Ethics is all about boundaries. The challenge with deepfake content is that the lines keep moving. Here’s where we might draw them:

1. Consent Is Non-Negotiable

Nobody should appear in a deepfake without giving permission. Period. Whether it’s for fun, profit, or political satire, consent must come first.

2. Disclosure Is Essential

If something is AI-generated, say so. Add watermarks. Include labels. Viewers deserve to know when a video is synthetic.

3. Ban Harmful Content

Certain deepfake uses should be outright illegal, especially revenge porn, political misinformation, and scams. The First Amendment matters, but so does protecting people from abuse.

4. Regulate, But Don’t Overreach

The government has a role, but not all solutions are legal ones. Overregulation could stifle creativity. A balanced approach is key.

5. Tech Companies Must Step Up

Platforms like YouTube, X (formerly Twitter), and Meta need stronger filters. They also need transparent policies and clear takedown processes.

What Does the Law Say?

As of 2025, several U.S. states have enacted deepfake laws. Texas bans deceptive deepfakes meant to influence elections. California targets sexually explicit deepfakes made without the depicted person’s consent. New York offers some civil protections. But nationwide legislation is still patchy.

Congress has considered bills like the DEEPFAKES Accountability Act, but progress remains slow. Technology moves faster than lawmakers. That’s a problem.

Can Technology Fix What It Broke?

Ironically, yes. AI can detect AI: new tools scan content for signs of manipulation. Companies like Microsoft and Adobe are working on verification systems to flag deepfakes before they go viral. Blockchain may also help. By tracking the origin and edits of digital media, such systems could help separate real from fake.

However, detection isn’t perfect. For every defensive advance, bad actors find new workarounds.
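For the curious, the idea of tracking a file’s origin and edits can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions, not how Microsoft, Adobe, or any blockchain product actually works. The function names (`append_record`, `verify_chain`, `matches_latest`) are made up for this example. Each record stores a fingerprint (a SHA-256 hash) of the media plus the hash of the previous record, so quietly rewriting history breaks the chain. A real system would also need digital signatures to prove who wrote each record.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 fingerprint of some bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def _hash_body(body: dict) -> str:
    # Serialize with sorted keys so the same body always hashes the same way.
    return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))


def append_record(chain: list, media_bytes: bytes, action: str) -> list:
    """Return a new chain with one more record describing the media's current state."""
    prev_hash = chain[-1]["record_hash"] if chain else GENESIS
    body = {
        "action": action,                        # e.g. "captured", "color-corrected"
        "media_hash": sha256_hex(media_bytes),   # fingerprint of the file at this step
        "prev_hash": prev_hash,                  # link to the previous record
    }
    return chain + [{**body, "record_hash": _hash_body(body)}]


def verify_chain(chain: list) -> bool:
    """Check that no record was altered and that every link points to its predecessor."""
    prev_hash = GENESIS
    for record in chain:
        body = {key: record[key] for key in ("action", "media_hash", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["record_hash"] != _hash_body(body):
            return False
        prev_hash = record["record_hash"]
    return True


def matches_latest(chain: list, media_bytes: bytes) -> bool:
    """True if the chain is intact and the file matches its most recent record."""
    return bool(chain) and verify_chain(chain) and chain[-1]["media_hash"] == sha256_hex(media_bytes)


if __name__ == "__main__":
    original = b"original video bytes"
    edited = b"color-corrected video bytes"

    chain = append_record([], original, "captured")
    chain = append_record(chain, edited, "color-corrected")

    print(verify_chain(chain))                                # True
    print(matches_latest(chain, edited))                      # True
    print(matches_latest(chain, b"face-swapped video bytes"))  # False
```

Note what this does and doesn’t prove. A matching fingerprint shows the file is the one the log describes. It says nothing about whether the original recording was honest in the first place.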

How Can You Spot a Deepfake?

There’s no foolproof method, but here are some clues:

- Weird blinking or facial movements

- Audio that doesn’t sync with the lips

- Blurry edges or inconsistent lighting

- Too-perfect speech with a robotic tone

When in doubt, check trusted news sources or reverse-search the video.

Final Thoughts: Proceed with Caution (and a Sense of Humor)

Deepfake technology isn’t going away. It’s powerful, accessible, and increasingly realistic. It can entertain, inform, deceive, or destroy, depending on who wields it. Drawing the ethical line means protecting privacy, preventing abuse, and preserving trust in public information. At the same time, we shouldn’t panic every time we see a celebrity saying something weird on TikTok. Like any powerful tool, deepfakes reflect their creators’ intent. A hammer can build a house or break a window; the same goes for synthetic media.

So, the next time you see a video that seems too wild to be true, ask yourself: Is it real, or a really convincing lie?